Why OpenAI should have never launched Sora

This doesn't mean the AI bubble is about to pop.

Last week, OpenAI announced that they will be shutting down the Sora app on April 26th. This makes sense, because Sora was a bad idea.

Sora was still innovative. The Sora 2 video model was funny and made social media-style videos, rather than the boring “filmic” AI videos typical of the time. The Cameo feature made deepfakes fun, and though I’m still not over how damaging Cameo was, it gave people FOMO and built hype around the Sora app.

AI videos are expensive to generate, and OpenAI paid for a lot of them. Because Sora was essentially TikTok with nothing but AI videos, the app didn’t appeal to normal people. So Sora’s users were incentivized to take those videos and post them literally everywhere else. Sora subsidized slop for everyone.

I don’t think that’s the main reason OpenAI is shutting down Sora, though. The Sora 2 video model wasn’t competitive anymore, and OpenAI would have had to invest heavily to keep up. Instead, they’re focusing on world models and large language models, which they never should have been distracted from in the first place.

The Sora models are outdated… already

OpenAI will also shut down Sora’s API on September 24th. Through the API, developers and creators can pay to generate AI videos with the Sora 2 models.

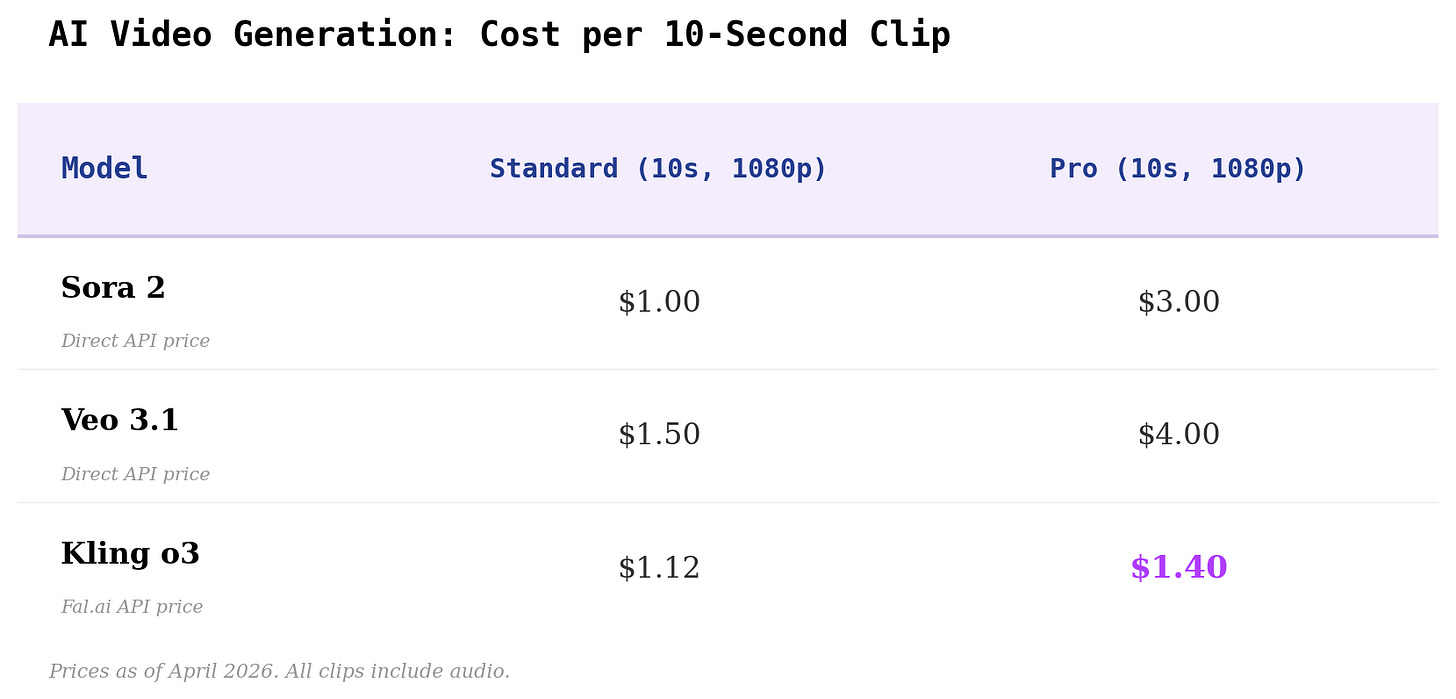

The baseline Sora 2 video, which is the default video inside the Sora app, is a 10-second, 720p video that costs $1.00 to create. In other words, any time you make a “free” video inside the Sora app, OpenAI “pays” $1.00 of API resources to create it for you. If you would rather get a video without a watermark, you can pay $1.00 through the API (or use a website like fal.ai or Higgsfield to do it for you).

Moreover, Sora 2 videos don’t look great by today’s standards. Mind you, Sora 2 was released barely six months ago. It’s fine for casual AI slop, but even creators who make “good” AI slop want something better now. The improved Sora 2 Pro model, however, costs $3.00 for a 10-second 1080p video, three times the price of the baseline.

Another mainstream AI video model, Google’s Veo 3.1, is more expensive than Sora, and in my opinion its output is even worse. But Kling’s o3 video model produces better quality than either Sora or Veo, and it’s cheaper.

Below is a benchmark I run on video models that I call “Four people talking.” Each character is supposed to introduce themselves in order, from left to right.[1] It tests prompt adherence. Sora mixes up the lines, and Veo mixes up the lines while also adding an extra person. Kling o3 is the first model ever to pass this test.

The most popular types of AI videos, and those most profitable for the creators and grifters who make them, are rarely made with Sora 2 anymore. Alibaba’s Wan video model, for instance, is used to make sexual content. I’m not sure which video model made AI Fruit Love Island, but it certainly was not Sora 2.

Sora’s models specialized in social media videos like fake Ring doorbell camera footage, bizarre scenarios, and engagement slop. Kling’s o3 model does these better and more cheaply, so I’ve seen a lot of slop pages make the switch. And with pressure from the upcoming Seedance 2.0 (which is still mysteriously delayed in the US and Japan), OpenAI needed to ship Sora 3 very quickly.

To be clear, the talented Sora team might have made Sora 3 the best of them all. But at what cost? Instead, the team will refocus on virtual world models. If you read the original Sora technical report from February 2024, titled “Video generation models as world simulators,” you’ll see that this was the goal for Sora all along.

The AI video race doesn’t matter

Sora’s closure is not a signal that the AI bubble is about to pop. It’s a signal that AI video generation is a shaky business model without the upside of large language models. During Sora’s 208-day lifespan, OpenAI lost a lot of ground to Anthropic where it matters most: large language models. Anthropic never got distracted by AI video.

There’s little doubt in my mind that “AI” is bad for the world, and that there’s an “AI bubble.” I’m not optimistic about an AI-dominated future. But I often see these legitimate criticisms bundled with the claim that “AI” is still bad at what it does, or that only stupid people use AI.

This isn’t a legitimate criticism anymore. Large language models have improved a lot in the past six months. I used to be very skeptical that AI would ever be useful. But after seeing Anthropic’s Claude Opus 4.5, released in November 2025, and its current iteration, Opus 4.6, I’m confident that LLMs will be economically useful for a long time.

When OpenAI released Sora in October, LLM progress felt stagnant. Since then, Gemini 3’s release triggered a “Code Red” memo at OpenAI, and now Anthropic is probably winning at code generation overall.

The same GPUs can train either video models or large language models, so every GPU spent on Sora was one not spent on LLMs. The highly publicized $1 billion deal between OpenAI and Disney will fall through, but that money is small compared to what OpenAI needs for large language models.

The bubble might still pop. OpenAI’s financials are still disconnected from reality. But there is industry-wide momentum in coding and enterprise solutions, and OpenAI can’t waste GPUs and resources on Sora any longer.

[1] The prompt for “Four people talking”:

Four people are lined up left to right standing in front of a restaurant entrance in the parking lot. Daytime. Jane: Middle-aged woman, artist type. 50 years old, olive-toned skin with faint freckles across her cheeks. Silver-streaked dark hair is twisted into a loose bun. Round tortoiseshell glasses that sit slightly askew, a faded indigo linen shirt smudged with ochre and cobalt paint, and rolled khaki pants flecked with dried pigment. Adrian: Teenage boy, skater. 17 years old, lanky frame, tan skin dusted with sun freckles. Hair is bleached at the tips, falling into his eyes in uneven waves. He wears a threadbare black hoodie with a cracked white logo, frayed denim shorts, and mismatched socks pulled into beat-up Vans. Richard: Elderly man, retired worker. 75 years old, leathery tan skin. His white beard is neatly trimmed, his pale blue eyes sharp under heavy brows. He wears a faded navy work shirt over a white t-shirt, sleeves rolled up to show forearms roped with veins and age spots. His hands are calloused, a gold wedding ring dulled, black work boots, distressed but neat jeans. Mikayla: Young woman, urban professional. Late 20s, East Asian descent, sleek bob. She wears a tailored charcoal blazer over a cream silk blouse, slim black trousers, and pointed leather loafers. A single minimalist gold earring curves along her ear, and her nails are painted a muted gray-green to match her structured leather bag. They introduce themselves left to right: Jane: “OK, I’ll start. Hi, I’m Jane” Adrian: “Sup, I’m Adrian.” Richard: “Hello, my name is Richard.” Mikayla: “And I’m Mikayla!”