YouTube's AI moderation is backfiring

These real artists have to fight a multi-front battle against AI.

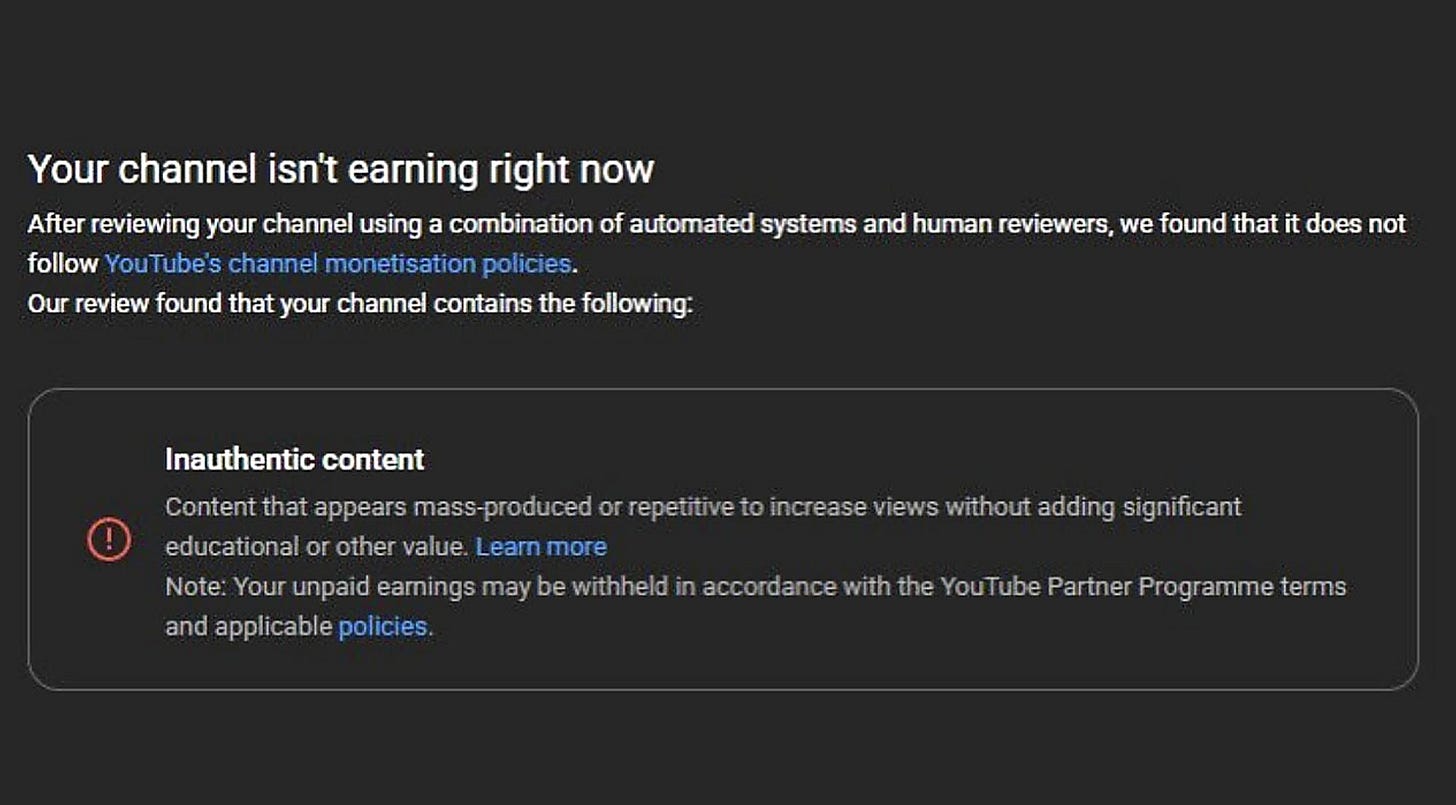

Numerous stop motion and animation artists have had their channels demonetized or banned after YouTube flagged their content as inauthentic. These accounts, which do not use AI, appear to be caught up in a wave of enforcement as YouTube tries to restrict inauthentic content.

Creators have struggled to appeal these decisions, and many have been unable to get the penalty reversed. Some have needed to turn to public statements to get YouTube’s attention.

Peter Heacock and Marie Hart are stop motion animators who run Tiny Grandma, where they use a puppet inspired by Marie’s mother to teach viewers how to make Korean cuisine. YouTube demonetized their channel citing “content that appears mass-produced or repetitive, without adding significant educational or other value”.

Peter and Marie were baffled. In a response video, Peter said, “I don’t think you can get any more hands-on or low-tech than frame-by-frame moving a puppet and having it come to life.” They submitted an appeal right away, and YouTube reversed its decision a few days later.

But that speedy resolution isn’t a given. Chris Plim runs Plim Stopmotion, where he posts stop motion tutorials. After multiple failed appeals through YouTube’s system, he resorted to making his own response video. The channel was finally remonetized shortly after.

Corey Nikolaus runs EraserDread, which YouTube removed entirely in January, citing its inauthentic content policy. YouTube didn’t stop there, removing two other channels linked to his account. One of them, “Monster Circus”, was removed before he could upload content to it. Corey has effectively been blocked from using YouTube as a creator. He has submitted multiple appeals, with no success.

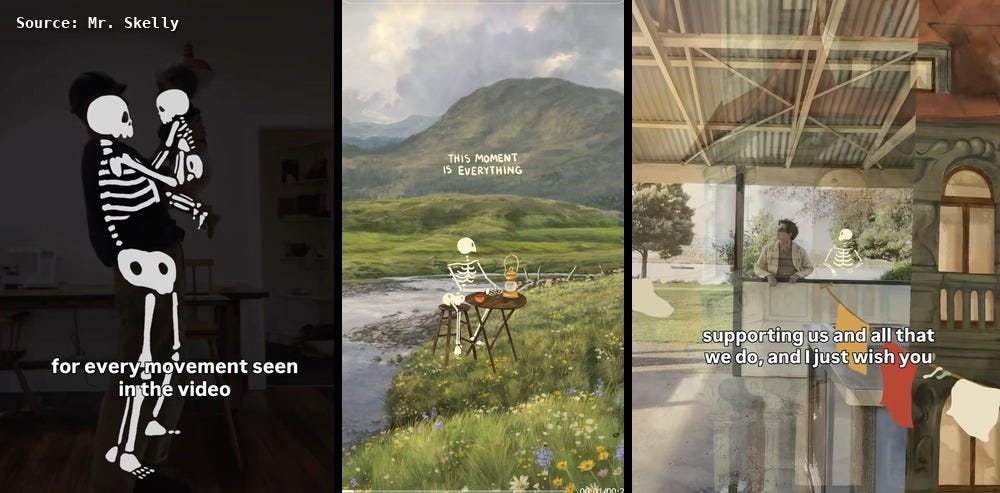

These aren’t isolated incidents. The Mr Skelly account has also been demonetized, despite behind-the-scenes videos showing that its content is authentic. The same goes for The Good Times Are Killing Me, another popular digital animation page. There are multiple Reddit threads from real creators struggling to get their accounts back, all reporting that they were labeled as inauthentic.

As AI videos copy handmade and digital art styles at scale, audiences are pushing back and expecting platforms like YouTube to moderate AI content. But YouTube’s methods are backfiring, harming the creators they should protect.

These broad-brush attempts to protect audiences from deceptive material accelerate the encroachment of AI-generated media. Animation and stop motion communities, already competing with AI media for viewers, are now fighting faulty moderation efforts as well.

YouTube did not respond to a request for comment. But because it moderates content at scale, combing through an average of 20 million videos per day, it’s safe to assume that AI moderation is used to keep up with the volume.

But these AI systems are not sufficiently context-aware to make critical judgements about authenticity. These systems need human oversight and intervention, especially when livelihoods are at stake.

If our small team at Riddance can conduct brief investigations to verify whether creators are real, should we not expect YouTube to have a similar capacity? As a profitable organization that releases AI video generators right into its platform, YouTube needs to learn from this mistake and allocate more resources towards authenticity review.

In the meantime, consider following these artists here:

TikTok:

@itsmrskelly

@tiny_grandmom

@plim.stopmotion

@good_times_show

@chris_plim

@good_times_show

@tinygrandmom

@1924us [Mr Skelly]

@eraserdread