How Tucker Carlson deepfakes poisoned Google’s AI Overview

These fake videos spread across social media and ranked high in search results and recommended feeds

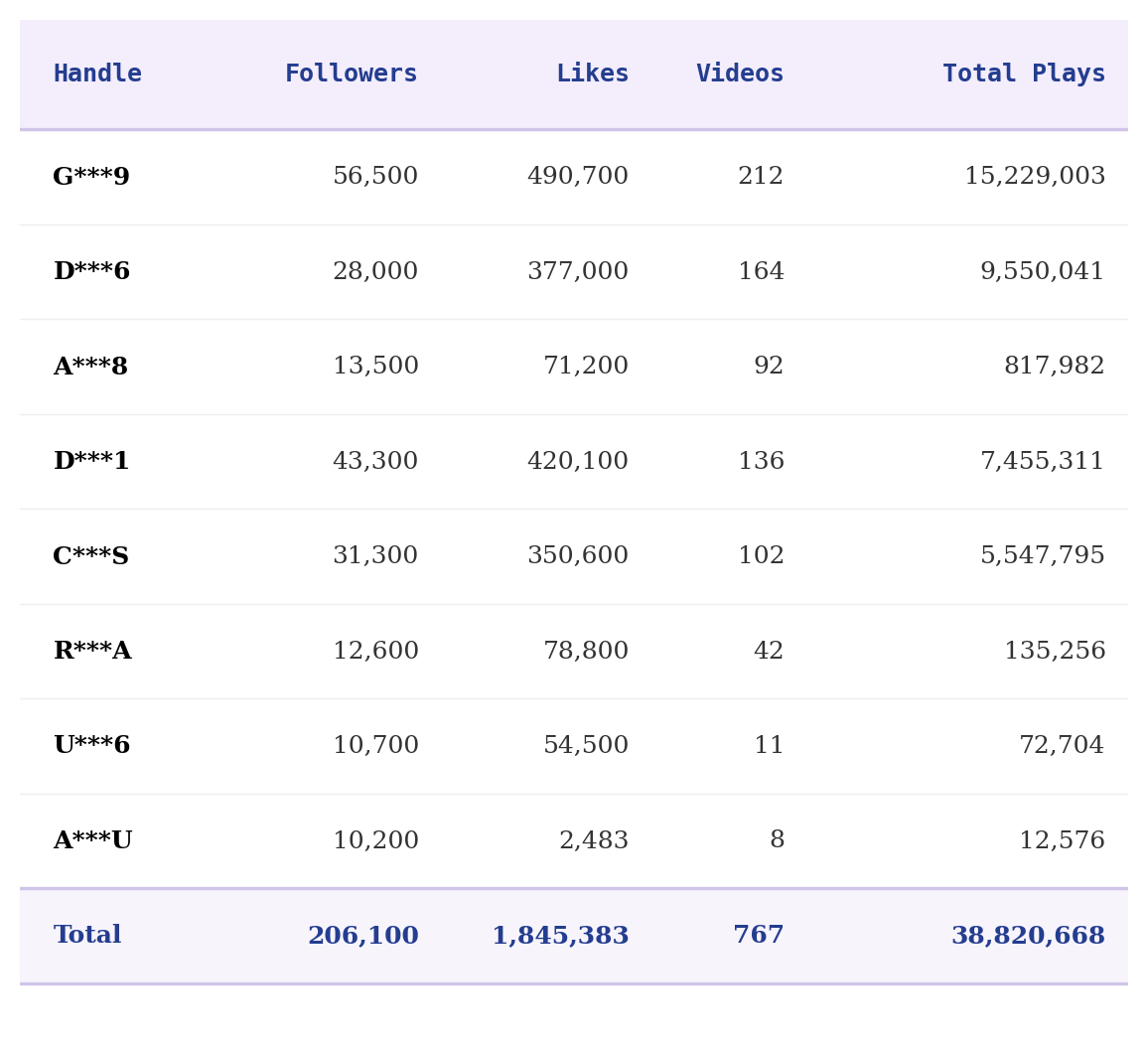

A network of inauthentic TikTok accounts created deepfakes of Tucker Carlson that spread across TikTok and X, poisoning Google’s AI Overview and search results. By May 5th, they had accumulated over 38 million views with videos using Carlson’s likeness and collected over 200,000 followers.

Riddance provided TikTok with a list of the accounts, which have since been removed. They were typical of the inauthentic accounts that every major video platform deals with.

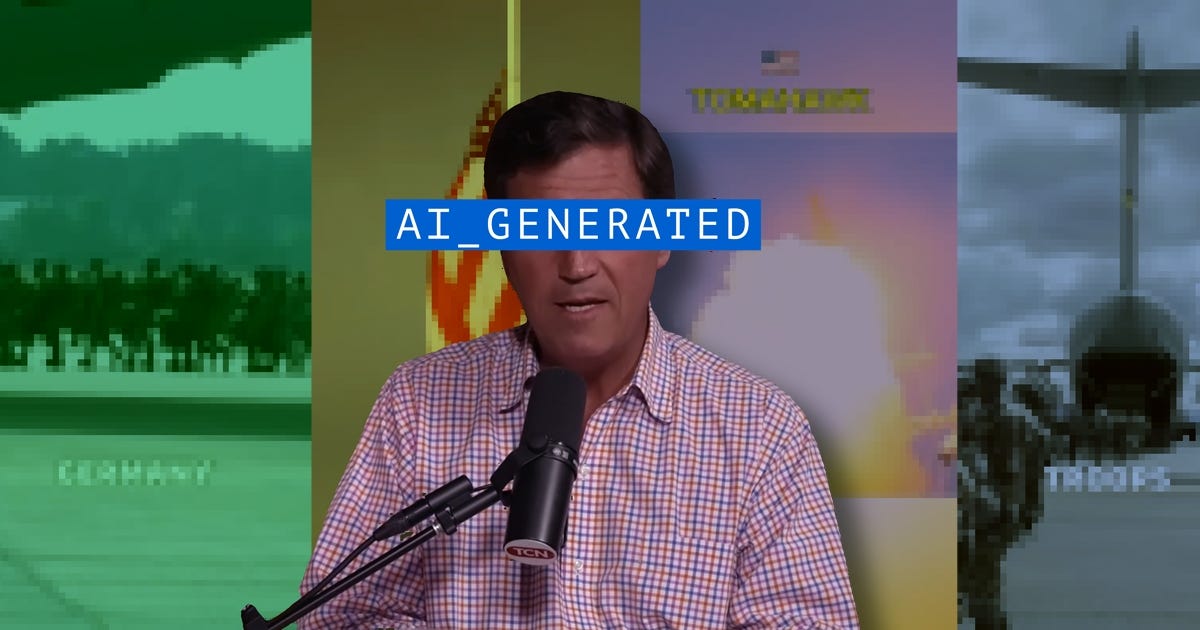

People send me tons of AI videos through direct messages and emails, but I found these in the wild. A couple of days after the White House Correspondents’ Dinner assassination attempt, I saw a deepfake using Tucker Carlson’s likeness, hinting at a conspiracy and failure of leadership.

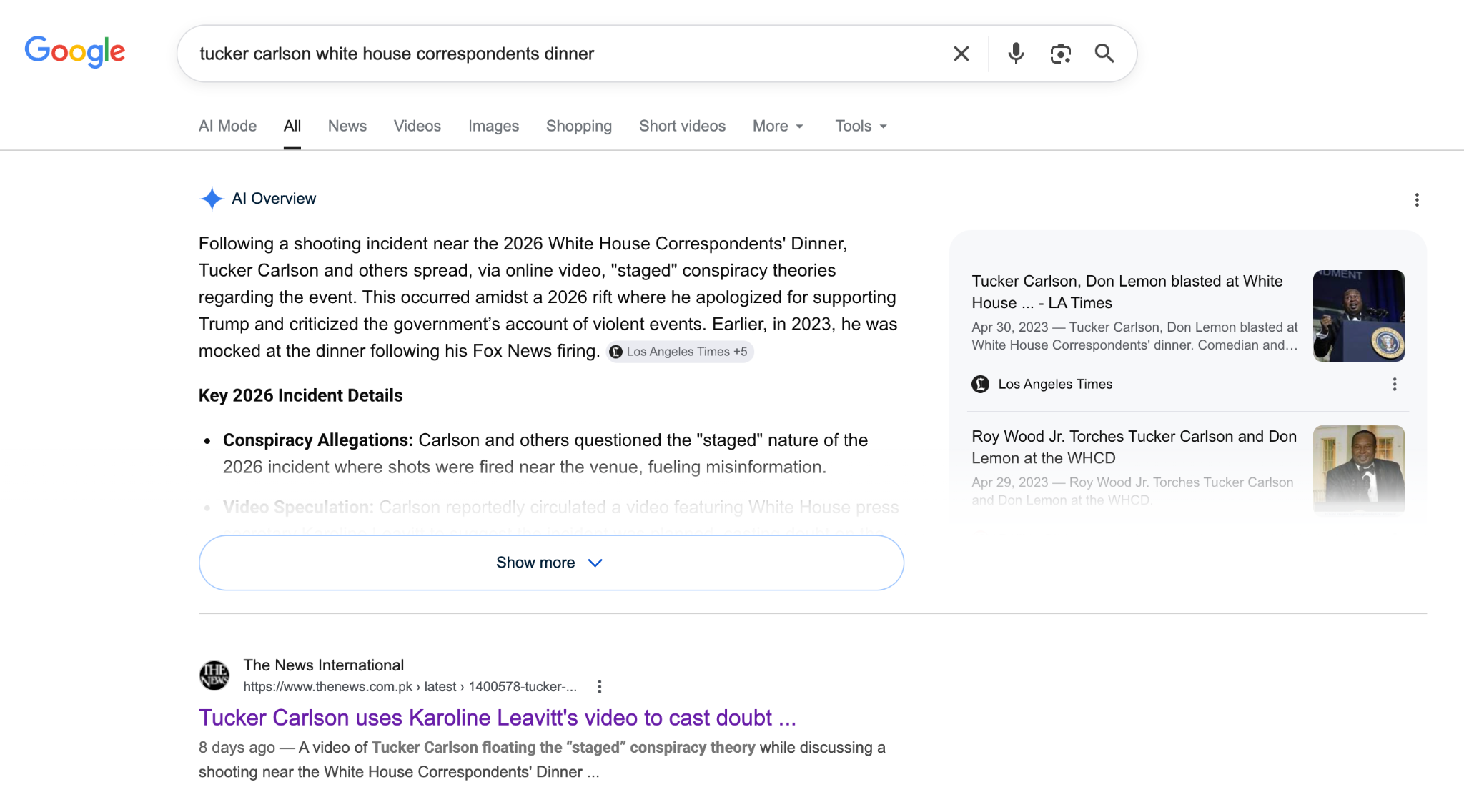

I wanted to compare the AI-generated script to what Carlson had actually said. So, I Googled “tucker carlson white house correspondents dinner”.

Google’s AI Overview said that “Tucker Carlson and others spread, via online video, ‘staged’ conspiracy theories regarding the event.” The first search result, from The News International, reported on “A video of Tucker Carlson floating the ‘staged’ conspiracy theory.” This story was the main source in Google’s AI Overview.

The News International’s story was wrong. It sourced a deepfake, posted on X with almost 280,000 views, that looked extremely similar to the one I had found. The story lazily reported on the spread of this video, unsure whether it was real.

To date, there’s no record that Carlson has made a public statement about the White House Correspondents’ Dinner assassination attempt. Google’s AI Overview was indirectly poisoned.

This particular campaign focused on posting to TikTok, while reposts to other platforms appeared to be unaffiliated. A brief investigation uncovered eight TikTok accounts in the network, coordinating posts with the same scripts or the exact same videos.

These accounts posed as Tucker Carlson fan pages or clippers. They often reported real news and mimicked Carlson’s actual views and talking points. But they also included occasional fake news, like fabricated stories about US ships being destroyed in the Strait of Hormuz, or content that exaggerated China’s or Iran’s narratives of the Iran war. Their specific claims were often difficult to search for and validate, and that was the point.

Their Carlson deepfakes were relatively high quality. They accurately mimicked Carlson’s unique cadence, aside from the occasional phrasing error (saying “twelve dollars million” instead of “twelve million dollars”). These videos used different source clips from Carlson’s show, alternating his clothing to avoid repetition. They included video cutaways of news events or real footage, too. This shows that real editors had a hand in some of these videos.

Referencing a previous article, TikTok noted that they “work diligently to get ahead of these accounts, and proactively prevented over 350 million fake accounts from signing up in 2025.”

This network used Tucker Carlson’s likeness to frame real news and fake news with a bipartisan, anti-establishment voice. Its videos were convincing enough to confuse humans, AIs, and search algorithms alike.