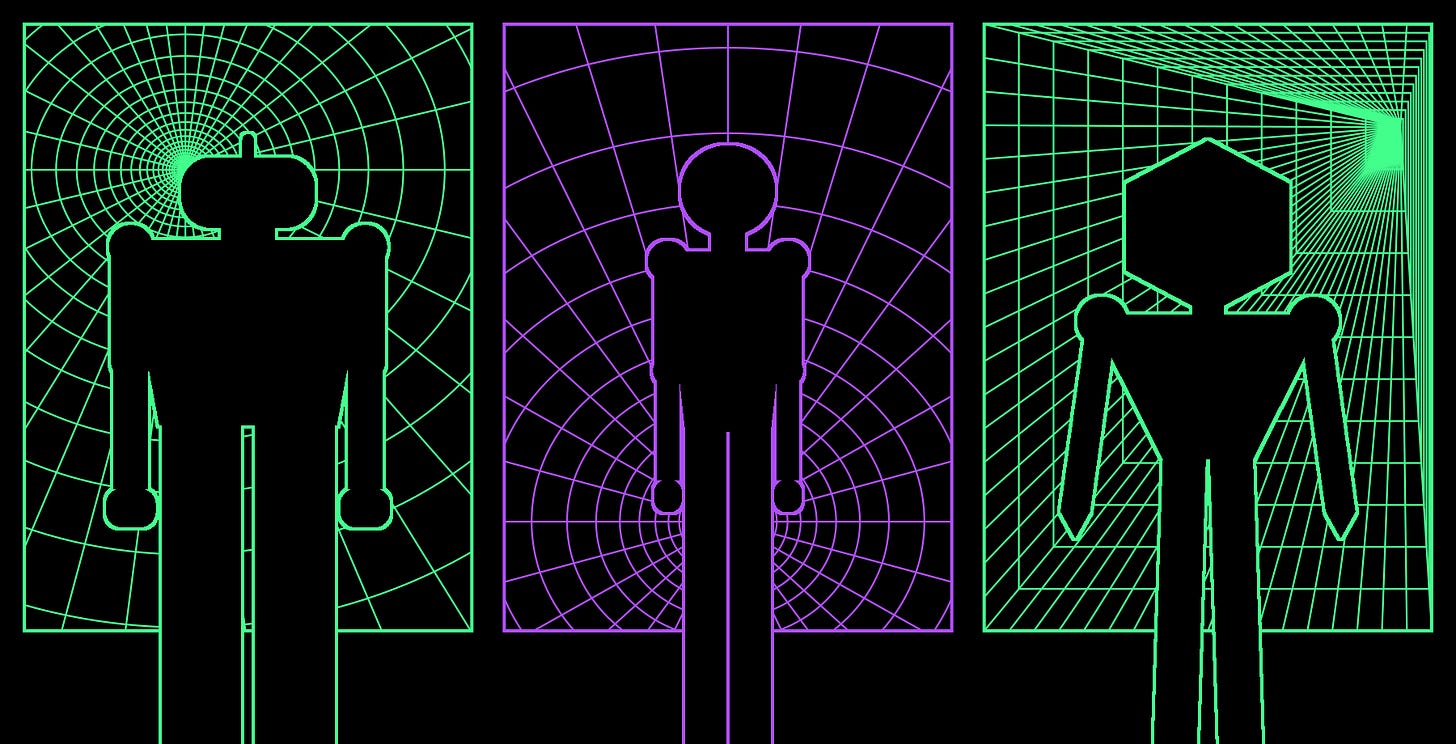

The Accountability Gap

When AI breaks the rules, who cares?

At Riddance, we’ve observed a pattern in our investigations: When two or more AI systems overlap, the guardrails designed to reduce harm and prevent Terms of Service (ToS) violations stop working as they should. This failure is not an edge case; it points to something structural about the difference between human labor and AI labor when accountability matters. Now that AI is increasingly capable of performing tasks that once required human minds, we must scrutinize the extent to which the accountability structures that inform human actions apply to AIs acting in those same capacities.

A double-sided failure

In our investigation into AI-generated sexualized videos of minors on Instagram, we identified content that, in our opinion, clearly violates Meta’s policies on child sexual exploitation. But given how the videos were made—stitching together AI scenes that were not necessarily problematic in isolation—the videos could have been generated by commercial models without hitting guardrails. And, because platforms automate a lot of moderation, the pedophilic innuendos in these videos went unnoticed. Human beings could have caught this behavior, but the existing systems failed.

AI-generated news accounts that spread false or misleading information about the most pressing current events present another opportunity for accountability failure. These channels impersonate real newscasters but inherit none of the consequences when the stories are false or drastically overstated. While platform-specific moderation flags much of this content for demonetization, if the actor has a political motivation rather than a financial one, these actions will barely deter them. The AI models making the videos are not jailbroken, and technically none of the content is in violation of platform-side ToS. From the perspective of the AI either generating or moderating the content, there is no problem, yet clearly this content is problematic, as evidenced by platforms trying to manually remove it.

This double-sided failure is a microcosm of the accountability gap writ large. The AI that made the content had no mechanisms in place to understand the harms it perpetuated, and the AI moderating the content had no general concept of what those harms look like in the first place. AI is not sufficiently sensitive to reasons for the actions it does or does not take, whether the domain is maintaining critical infrastructure or removing CSAM-adjacent content from short-form video algorithms. Right now, we are hyper-focused on outcomes in AI systems and not paying enough attention to reasons that guide actions taken to effect outcomes. In order to have safe AI we desperately need both.

Accountability requires sensitivity to reasons

Human beings constantly navigate an intricate space of reasons. Some examples include: choosing not to invite a friend to dinner because you know they do not get along with another invited guest; making a plan to help break a bad habit; changing behavior because someone asked you to do something differently. Two distinctive qualities of the reasons guiding human action are how broad they can be and how connected they are to the effects those actions have in the world. The narrowness of the reasons guiding AI actions and the inability of AI systems to perceive downstream consequences are just two of the key differences between the reasons guiding human action and those guiding AI action.

Reasons for actions and accountability are intimately linked. The reasons that guide our actions are often backstopped by accountability, and accountability itself always requires sensitivity to reasons. Social rules, laws, and guidelines are norms that we adhere to if we agree with the reasons behind them. Of course, sometimes rule-following happens because we fear the consequences of breaking a rule—if I drive too fast I’ll get a ticket—but the best rules are generally those that we follow because we assent to reasons supporting them, not to an auxiliary reason riding alongside them. Whether we adhere to norms because we are self-interest maximizers (consequence fearing) or because we think some norms are themselves worthy of adherence, we have a sense of accountability as we make a decision about following them.

When training an AI model, all sorts of rewards and feedback get used in an effort to minimize a loss function or optimize for some behavior. In addition to training for performance, this is an attempt to bake in awareness of specific guardrails or norms. But once a model is deployed, that feedback mechanism vanishes. The real, messy world of interconnected reasons and norms has no purchase on the decision-making calculus of a model. Furthermore, beyond their context window, AI models are completely blind to downstream effects of their actions.

Over the course of a session, one can tweak the prompt context to adjust for more or less desirable outcomes, but there is no reflective moment where an AI can pause and assess the stakes or meaningfully feel responsible. It’s not even clear what such a reflective moment would look like for an AI, and as a result AI models lack accountability in a very structural way.

This lack of accountability might seem like a good thing. After all, AI is not human, and deflecting responsibility for actions to non-human systems might look like a cop-out at best and a deliberate tactic for avoiding consequences at worst. But if we are willing to offload increasing amounts of human labor, much of it increasingly impactful, to AI, then we have to seriously confront the accountability gap that follows.